How Do Your Customers Feel About Your App?

Performance monitoring essentially tries to answer one question: "Is everyone happy?" Or at least "relatively happy," or "how many people are unhappy right now?" When you monitor your web applications, you may have several tools, each with a different set of data; one tool that takes many measurements; or groups of items that each have a different idea of happiness. Happiness in this case is mostly about response times from different pieces of the application. End users are happier when they perceive things as fast.

Measuring Customer Satisfaction

So how do you reconcile all these different bits of information about pieces of your application to answer whether your customers are satisfied? You need a measurement system that can level out the various measurement types and groups of things.

Luckily, Apdex provides a standard methodology for this problem.

Apdex is a numerical measure of user satisfaction with the performance of enterprise applications. It converts many measurements into one number on a uniform scale of 0-to-1 (0 = no users satisfied, 1 = all users satisfied). This metric can be applied to any source of end-user performance measurements. If you have a measurement tool that gathers timing data similar to what a motivated end-user could gather with a stopwatch, then you can use this metric. Apdex fills the gap between timing data and insight by specifying a uniform way to measure and report on the user experience.

The index translates many individual response times, measured at the user-task level, into a single number. A Task is an individual interaction with the system within a larger process. Task response time is defined as the elapsed time between when a user does something (clicks the mouse, hits Enter or Return, etc.) and when the system (client, network, servers) responds such that the user can proceed with the process. This is the time during which the human is waiting for the system. These individual waiting periods are what define the "responsiveness" of the application to the user.

The index is based on three zones of application responsiveness:

- Satisfied: The user is fully productive. This represents the time value (T seconds) below which users are not impeded by application response time.

- Tolerating: The user notices performance lagging on responses that take longer than T seconds, but continues the process.

- Frustrated: Performance with a response time greater than F seconds is unacceptable, and users may abandon the process.
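The three zones can be turned into a score with a few lines of code. The following is a minimal sketch, not AlertSite's implementation: it uses the standard Apdex formula (satisfied samples count fully, tolerating samples count half, frustrated samples count zero) and assumes the conventional setting F = 4T. The function name `apdex` and the sample data are made up for illustration.

```python
from typing import Iterable


def apdex(response_times: Iterable[float], t: float) -> float:
    """Return the Apdex score for response times in seconds and threshold T.

    Assumption: the frustration threshold F is 4 * T, the conventional
    Apdex setting. This article does not state how F is chosen.
    """
    f = 4 * t
    satisfied = tolerating = total = 0
    for rt in response_times:
        total += 1
        if rt <= t:
            satisfied += 1      # satisfied zone: at or below T
        elif rt <= f:
            tolerating += 1     # tolerating zone: above T, up to F
        # anything above F is frustrated and adds nothing to the score
    if total == 0:
        raise ValueError("at least one sample is required")
    return (satisfied + tolerating / 2) / total


# Hypothetical samples with T = 2 seconds (so F = 8 seconds):
# four satisfied, one tolerating, one frustrated.
samples = [0.5, 1.2, 2.0, 3.5, 9.0, 0.8]
score = apdex(samples, t=2.0)
print(round(score, 2))  # 0.75
```

Because tolerating samples earn half credit, a score can drop well below 1 even when no user is frustrated.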

Apdex values fall between 0 and 1, where 0 means that no users are satisfied and 1 indicates that all user samples were in the satisfied zone. Clearly, a higher number is better, but not all applications will achieve, or need to achieve, a perfect value of 1. The Apdex Alliance has developed a simple rating scale that provides a quick assessment of an application. The ratings, along with the Apdex values that define them, are shown below.

Performance Equals How Your Customers Feel

This means that you can compare all the different bits of information on the same performance scale, where performance equals how happy your users are.

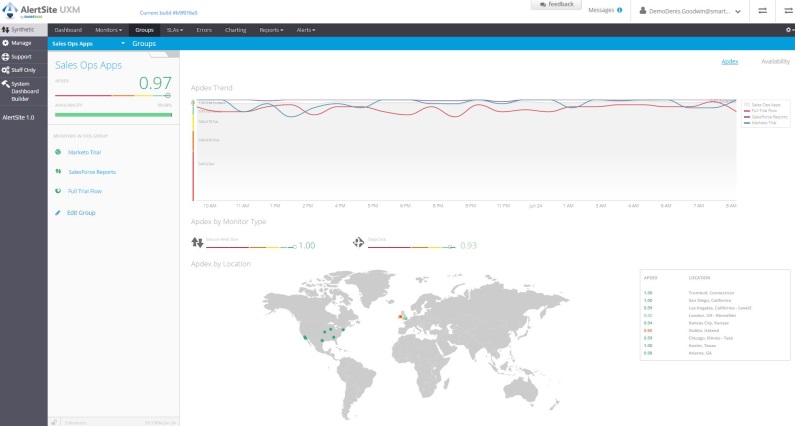

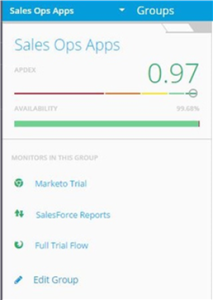

So now let me tell you how we incorporated this into AlertSite. We introduced some new views and dashboards a few weeks ago, and one of the capabilities we offer is grouping things together according to how you want to look at them. For example, you might want to see everything related to one specific application: the API monitor that the app consumes, the shopping cart, and the entire application transaction. To see at a glance whether that application is keeping users happy, you need to compare the performance of each part of the application. Hence a group of items is scored with Apdex.

Why do we like Apdex?

Apdex is a great way to depict the aggregate performance of Groups. By allowing monitors with disparate response times to be evaluated on a common scale, you get two wins: (1) the ability to aggregate apples and oranges into a single group score, and (2) the ability to compare apples and oranges in a meaningful way.

Apdex is essentially a hybrid metric: it combines elements of both performance and availability in a single number. Apdex doesn't care whether your monitor is slow or failing; it evaluates each run of a monitor against an appropriate threshold to determine the health of that run. So the Apdex score gives you a sense of the health of applications monitored by one or several monitors, regardless of whether they are running fast or slow, succeeding or failing.
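To make the group idea concrete, here is a rough sketch of how runs from monitors with very different response times might be rolled into one score. It is an illustration under stated assumptions, not a description of how AlertSite computes group scores: each monitor gets its own threshold T, F is taken as 4T, and a failed run is counted as frustrated. The `Run` class, the `group_apdex` function, and the monitor names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Run:
    monitor: str                    # hypothetical monitor name
    response_time: Optional[float]  # seconds; None means the run failed


def group_apdex(runs: List[Run], thresholds: Dict[str, float]) -> float:
    """Score a group of runs, judging each run against its own monitor's T.

    Assumptions: F = 4 * T for every monitor, and a failed run falls in
    the frustrated zone (it contributes nothing to the score).
    """
    if not runs:
        raise ValueError("at least one run is required")
    satisfied = tolerating = 0
    for run in runs:
        t = thresholds[run.monitor]
        if run.response_time is None:
            continue                # failed run: treated as frustrated
        if run.response_time <= t:
            satisfied += 1
        elif run.response_time <= 4 * t:
            tolerating += 1
    return (satisfied + tolerating / 2) / len(runs)


# A fast API monitor and a slow full transaction share one scale.
thresholds = {"api": 0.5, "cart": 2.0, "checkout": 8.0}
runs = [
    Run("api", 0.3), Run("api", 1.0),         # satisfied, tolerating
    Run("cart", 1.5), Run("cart", None),      # satisfied, failed
    Run("checkout", 7.0), Run("checkout", 40.0),  # satisfied, frustrated
]
score = group_apdex(runs, thresholds)
print(round(score, 2))  # 0.58
```

Judging each run against its own threshold is what lets a 7-second checkout and a 0.3-second API call both count as healthy in the same group score.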

While we like Apdex a lot, we know that there isn't a single metric or status algorithm that's suitable for every situation. SmartBear plans to introduce options for both threshold and status determination for dashboards, alerts, and reports.

What are your thoughts on comparing measurements and understanding performance scores?